Most of our work at Digisalix never makes it to a public slide deck – and that’s by design. We work with R&D teams on problems that are either confidential, deeply embedded in internal systems, or simply not very visual by nature. The results are there, running in the background, doing their job. Quietly.

But when someone asks, “So, what exactly do you do?”, the answer can sound like we’re avoiding the question. Then they ask, “What have you done?” – and it gets even more awkward.

So, we built a demo.

Not a product – a demo. It illustrates how we approach complex problems. The demo simulates a swarm of autonomous agents exploring an unknown environment using LIDAR, roadmap planning, and multi-agent coordination.

Since we’ve worked on similar planning problems before, we made this a summer internship project, with support from the team. Our interns work on real technical problems, not decorative side quests, and in this case the result was a working system in under four months.

This post breaks down what the system does, the thinking behind it, and how we built it.

Quick Overview

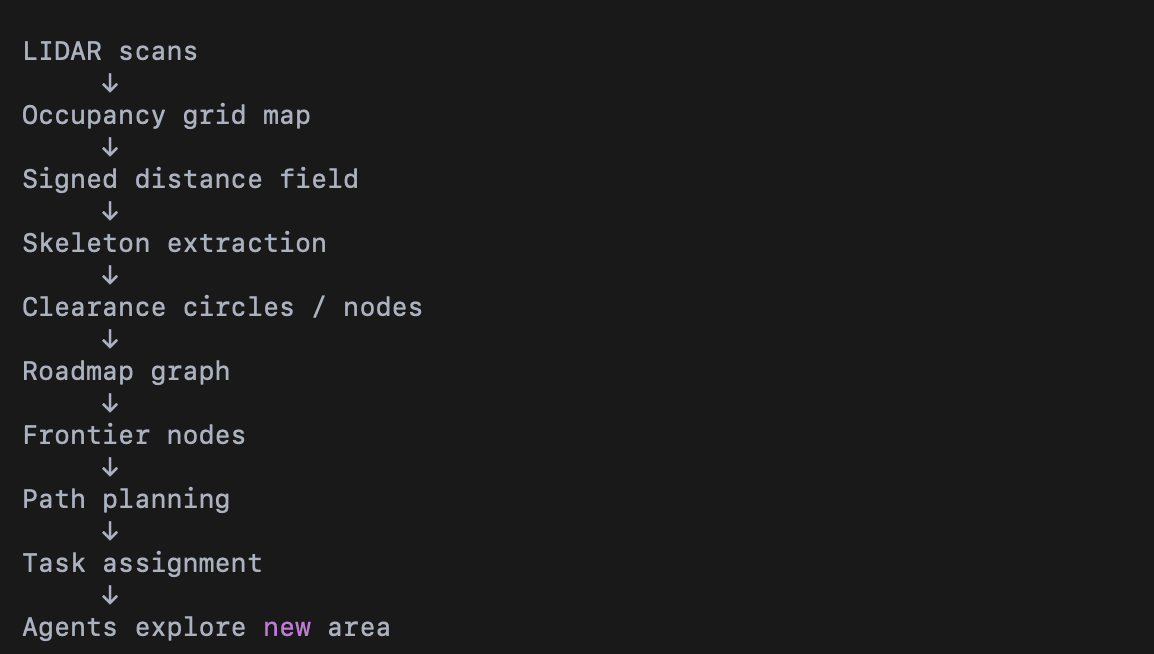

At a high level, the exploration system works as follows:

- Drones scan the environment using LIDAR and update a shared map.

- A roadmap of the navigable space is constructed using the Skeleton Disk-Graph algorithm, based on Noël et al. (2023).

- Nodes near unexplored regions are identified as frontier nodes.

- Graph search algorithms plan efficient paths to those nodes.

- A prioritized planner assigns exploration tasks to multiple agents.

Together, these steps allow the swarm to gradually expand the mapped area while maintaining safe navigation and efficient coverage.

1. The Scenario: Autonomous Exploration of an Unknown Environment

How can mobile robots explore an unknown environment?

To do this effectively, the system needs two things:

- a representation of the environment that updates as new information arrives

- a planning algorithm that can efficiently decide where to go next

Both need to be computationally efficient and flexible enough to work in different environments – a trade-off that must be carefully balanced.

In this demo, multiple autonomous agents explore and map an environment collaboratively. Each agent is equipped with a scanner, for example a LIDAR sensor, that measures distances to nearby obstacles. The agents share their observations, allowing the system to gradually build a shared map of the explored area.

To keep the demo focused on the planning problem, we simplified the setting in two ways:

- the environment is two-dimensional

- scanning and mapping are assumed to be ideal

This leaves us with three main technical challenges:

- Representing the known environment and reachable unknown areas in a form suitable for exploration.

- Finding safe paths that allow agents to reach unexplored regions.

- Coordinating multiple agents so they explore efficiently without duplicating work.

2. Planning Logic: Geometry-Based Exploration Planning

At a high level, the planning logic follows four steps:

- model traversable space using overlapping clearance circles

- connect their centres into a sparse roadmap

- identify promising exploration targets near unexplored space

- assign routes across the roadmap

On the surface this sounds simple. Under the hood, it requires constructing a representation of the environment that supports both safe navigation and efficient exploration.

Why Circles?

Circles are used to ensure the robots remain at a safe distance from obstacles. By navigating between the centres of overlapping circles, agents can travel safely even through narrow spaces.

This representation also adapts naturally to the structure of the environment. Narrow corridors form chains of circles, while open areas produce larger circles with more flexible navigation options.

Roadmap Construction

The scan results are first converted into an occupancy grid, where each cell represents either:

- free space

- blocked space (obstacles)

- unknown space

For roadmap construction we temporarily treat unknown areas as free. This allows us to analyse the geometry of the currently known environment.

The roadmap construction follows the Skeleton Disk-Graph Roadmap algorithm proposed by Noël et al.

Step 1 – Distance Field

For each grid cell we compute the distance to the nearest obstacle. This produces a signed distance field.

The result can be visualised like a terrain map where colour represents distance to obstacles rather than elevation.

Step 2 – Skeleton Extraction

Next we compute the ridges of the distance field. These correspond roughly to the middle of navigable spaces and form a skeleton for the roadmap.

Intuitively, the skeleton identifies the safest routes through the environment.

Step 3 – Node Sampling

The skeleton itself is dense and often noisy. Sparsifying it to retain only key nodes turns it into a robust and efficient representation for path planning and exploration.

Nodes are sampled from the skeleton by prioritising:

- junctions in the skeleton (important navigation points)

- regions representing large areas of free space

Each sampled node can be interpreted as a circle of free space, with its radius determined by the distance to nearby obstacles.

Step 4 – Edge Construction

Nodes are connected if their circles overlap. These connections form a graph roadmap that represents safe travel routes through the environment.

Additional checks ensure that edges can be traversed without approaching obstacles too closely.

Exploration Planning

The roadmap allows efficient path planning, but exploration requires deciding where to go next.

Each roadmap node can be evaluated based on the amount of unknown space nearby. Nodes that border unexplored regions become potential exploration targets.

These targets – called frontier nodes – represent places where visiting the node is likely to reveal new parts of the environment.

The utility function for selecting the next target is a weighted average of two competing factors:

- the cost of reaching the node, i.e. path length, and

- the expected reward, meaning the amount of new area likely to be mapped.

The frontier node with the best utility is selected as the next target. Standard graph search algorithms such as Dijkstra’s algorithm or A* can then be used to compute the paths.

Multi-Agent Task Assignment

The final challenge is coordinating the swarm. Planning quickly becomes complex because:

- the environment becomes dynamic as agents move

- the planning space grows exponentially with the number of agents

Instead of aiming for a computationally expensive optimal solution, we used a simpler approach: prioritized planning.

In prioritized planning, path assignments are made in sequence. Higher-priority agents plan first, and lower-priority agents take those earlier plans into account to avoid choosing the same target or blocking the path. This reduces the planning problem to a sequence of mostly single-agent planning problems, keeping the computational cost small.

3. System Design: What’s Under the Hood

The system consists of four main components:

Backend (Python)

Runs the simulation loop, planner, and environment model.

Server (FastAPI)

Handles communication between the simulation and the frontend.

Frontend (React + TypeScript + Three.js)

Provides the interactive user interface for the simulation, with two alternative views.

- Demo control view

Used to control the demo world, for example by placing new obstacles and interacting with the environment. - Supervisor view

Emulates the operator view of the system, showing the information the actual user would monitor during operation.

The backend runs the simulation loop and computes the planning logic. The frontend visualizes the agents, the roadmap, and the evolving map of the environment, while also providing the two different views for interacting with and supervising the system.

4. From Paper to Demo

The approach was inspired by the paper:

Skeleton Disk-Graph Roadmap: A Sparse Deterministic Roadmap for Safe 2D Navigation and Exploration (Noël, Lehuger, Marchand, Chaumette – 2023)

The implementation required several practical adaptations. For example, we added a distance threshold to avoid skeleton points appearing too close to walls, which could otherwise introduce unsafe paths.

Another design decision was separating responsibilities between the simulation and the planner. The planner controls the robots, while the simulation handles the environment. This makes it possible to reuse the planning system outside the simulation environment.

The project also provided a practical lesson in how, and how not, to use large language models in programming. Generating large blocks of code with LLMs can accelerate early development, but maintaining and evolving that code later can become difficult. In practice, we found LLMs most useful for small, isolated snippets while keeping overall architectural control in human hands.

Conclusion

This demo isn’t a product, and it wasn’t meant to be. But it does show what we’re good at: turning complex ideas into working software.

In this case, that meant turning a research-level roadmap algorithm into a working multi-agent exploration system.

If you’re working on an R&D project involving simulation, planning logic, or algorithm-heavy systems, and you’re a few steps away from something that works, we might be able to help.

We like tricky problems and algorithmic wizardry, and we aim for the most elegant solution. We use AI when it’s clearly the best option, but more often than not we rely on good old-fashioned craftsmanship to achieve results we can proudly stand behind.

We are not conjurers of cheap tricks. We build the algorithms that make systems work.

We also made a video of the demo: Autonomous Mapping with a Drone Swarm and Supervisor System